Home

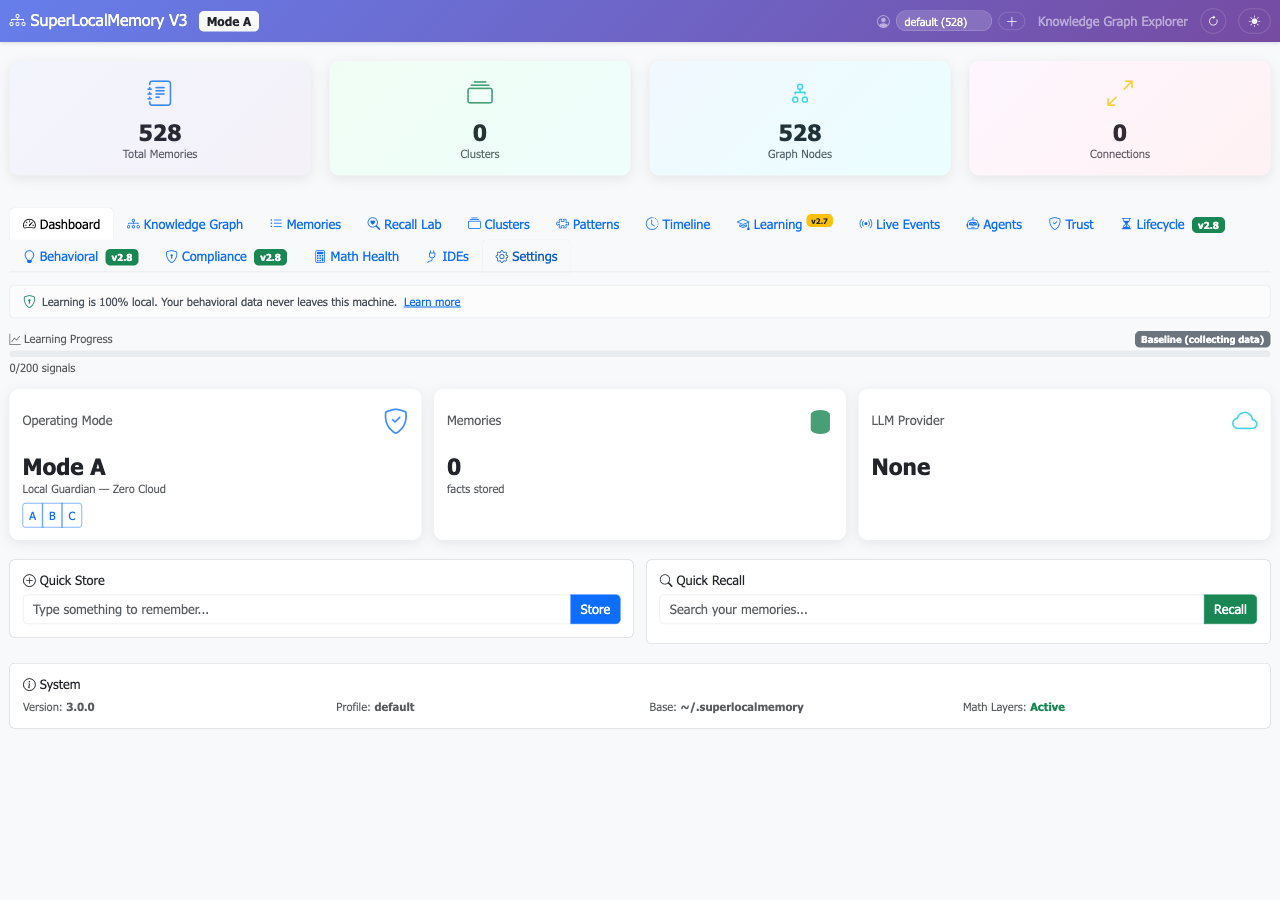

The first local-only AI memory to break 74% retrieval on LoCoMo. No cloud. No APIs. No data leaves your machine.

SuperLocalMemory gives AI assistants persistent memory across sessions. Install once, and your AI remembers your projects, preferences, decisions, and debugging history — forever.

SLM now forms connections between memories, surfaces them automatically, detects contradictions, and consolidates knowledge during idle time. Four new capabilities: multi-signal auto-invoke, SYNAPSE spreading activation (5th retrieval channel), bi-temporal contradiction detection, and sleep-time consolidation with Core Memory blocks. Zero breaking changes — every feature is opt-in. Read more →
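For readers unfamiliar with spreading activation, the general idea can be sketched in a few lines of Python. This is a generic illustration of the technique, not SYNAPSE's actual implementation: the function name, the `decay`, `threshold`, and `max_hops` parameters, and the graph layout are all assumptions made for the example.

```python
from collections import defaultdict


def spread_activation(graph, seeds, decay=0.5, threshold=0.05, max_hops=3):
    """Generic spreading activation (hypothetical sketch, not SLM's API).

    graph: dict mapping a memory id to a list of (neighbor_id, weight) pairs,
           with weight in [0, 1].
    seeds: dict mapping the initially recalled memory ids to their activation.
    """
    activation = defaultdict(float)
    for node, value in seeds.items():
        activation[node] = value

    frontier = dict(seeds)
    for _ in range(max_hops):
        next_frontier = defaultdict(float)
        for node, value in frontier.items():
            for neighbor, weight in graph.get(node, []):
                passed = value * weight * decay  # energy fades with each hop
                if passed >= threshold:          # drop negligible activation
                    next_frontier[neighbor] += passed
        if not next_frontier:
            break
        for node, value in next_frontier.items():
            activation[node] += value
        frontier = next_frontier
    return dict(activation)


# Toy association graph: "a" links to "b", which links to "c".
toy_graph = {"a": [("b", 1.0)], "b": [("c", 1.0)]}
result = spread_activation(toy_graph, {"a": 1.0})
```

Memories that were not matched directly (here `b` and `c`) still receive a score because they are linked to a matched memory, which is what lets an association graph act as an extra retrieval channel.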

SLM now learns from your usage patterns and gets smarter over time — at zero token cost. Every recall generates learning signals. After 20+ signals, the system starts optimizing retrieval for YOUR specific patterns. After 200+, a full ML model trains on your data. No other memory system learns without spending LLM tokens. Read more →
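The staged behavior described above can be pictured as a simple threshold rule. The sketch below is illustrative only: the stage names and the function are hypothetical, and it assumes "20+" and "200+" mean "at least 20" and "at least 200" learning signals.

```python
def learning_stage(signal_count: int) -> str:
    """Map a count of learning signals to a stage (hypothetical names)."""
    if signal_count < 0:
        raise ValueError("signal_count must be non-negative")
    if signal_count >= 200:   # assumed cutoff for training a full ML model
        return "ml_model"
    if signal_count >= 20:    # assumed cutoff for pattern-based tuning
        return "pattern_tuning"
    return "collecting"       # still gathering signals from recalls


stages = [learning_stage(n) for n in (0, 19, 20, 199, 200)]
```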

npm install -g superlocalmemory # or: pip install superlocalmemory

slm setup # Choose mode A/B/C

slm warmup # Pre-download embedding model (optional)

That's it. Your AI now remembers you.

| Mode | What It Does | Cloud Required |

|---|---|---|

| A: Local Guardian | Zero cloud. Your data never leaves your machine. EU AI Act compliant. 74.8% on LoCoMo. | No |

| B: Smart Local | Local LLM via Ollama for answer synthesis. Still fully private. | No |

| C: Full Power | Cloud LLM for maximum accuracy (87.7% on LoCoMo). | Yes |

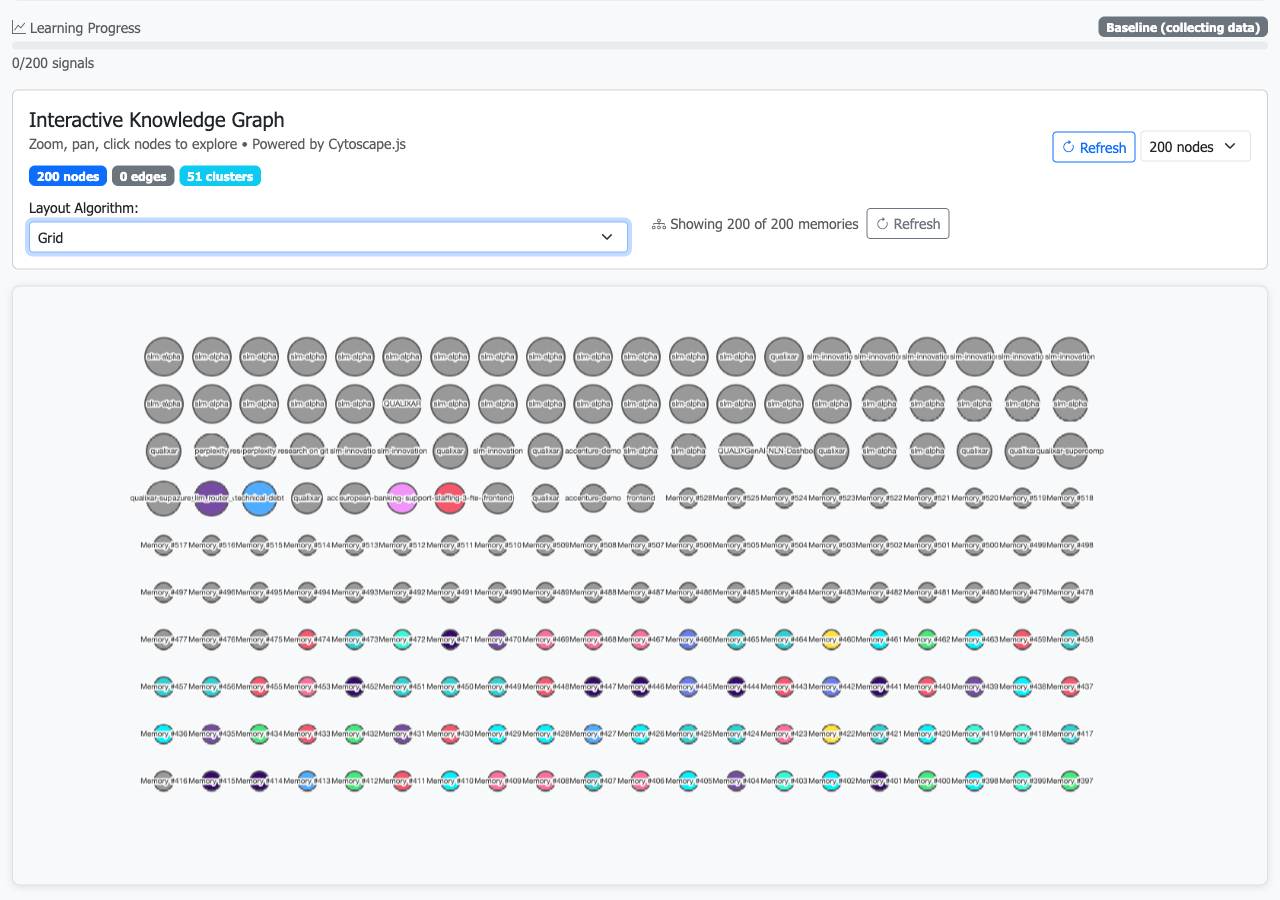

V3 Dashboard Screenshots


- Works in 17+ IDEs — Claude Code, Cursor, VS Code, Windsurf, Gemini CLI, JetBrains, and more

- Dual Interface: MCP + CLI. MCP for IDEs, agent-native CLI (`--json`) for scripts, CI/CD, agent frameworks

- 4-channel retrieval: Semantic + keyword + entity graph + temporal for maximum accuracy

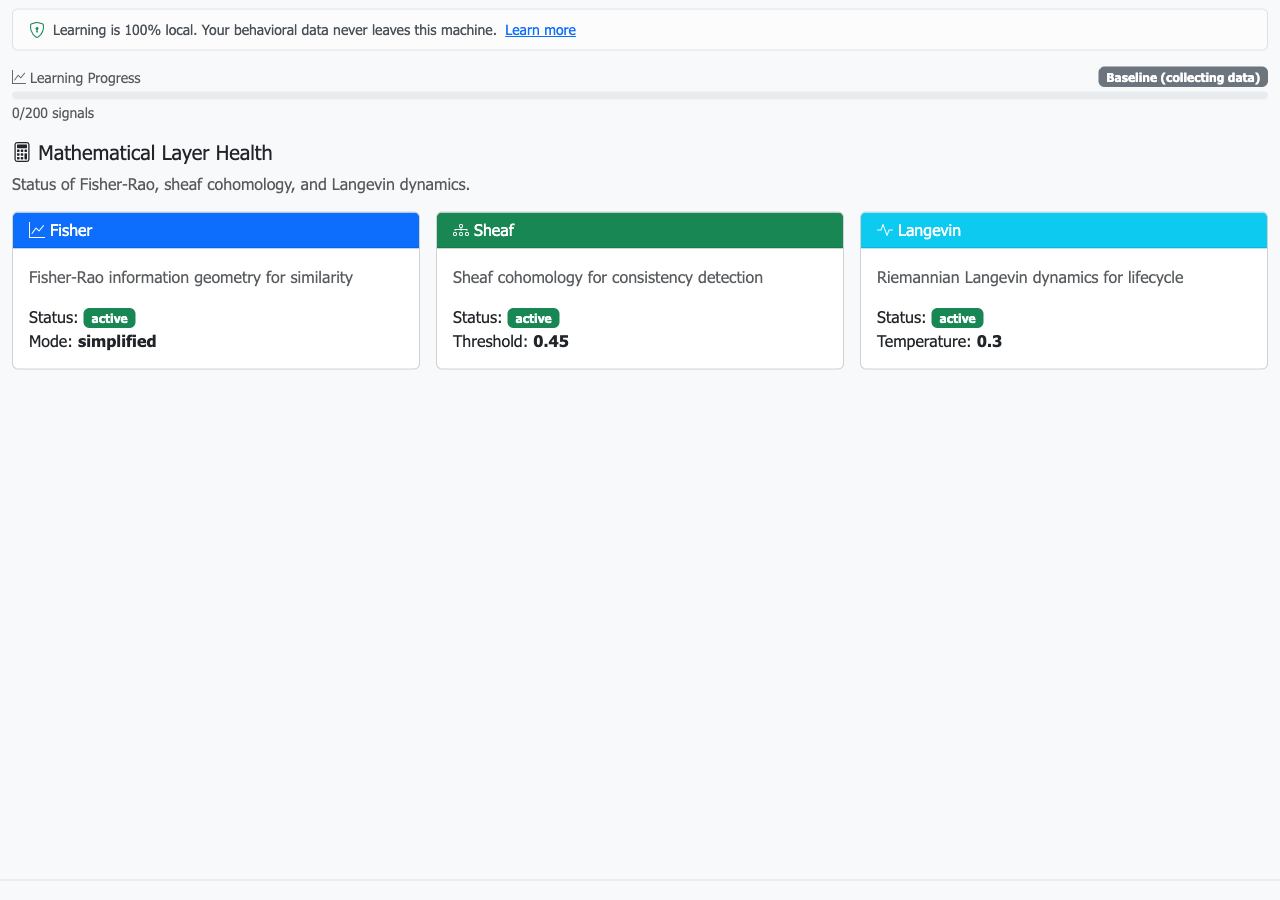

- Mathematical foundations — Fisher-Rao similarity, sheaf consistency, Langevin lifecycle

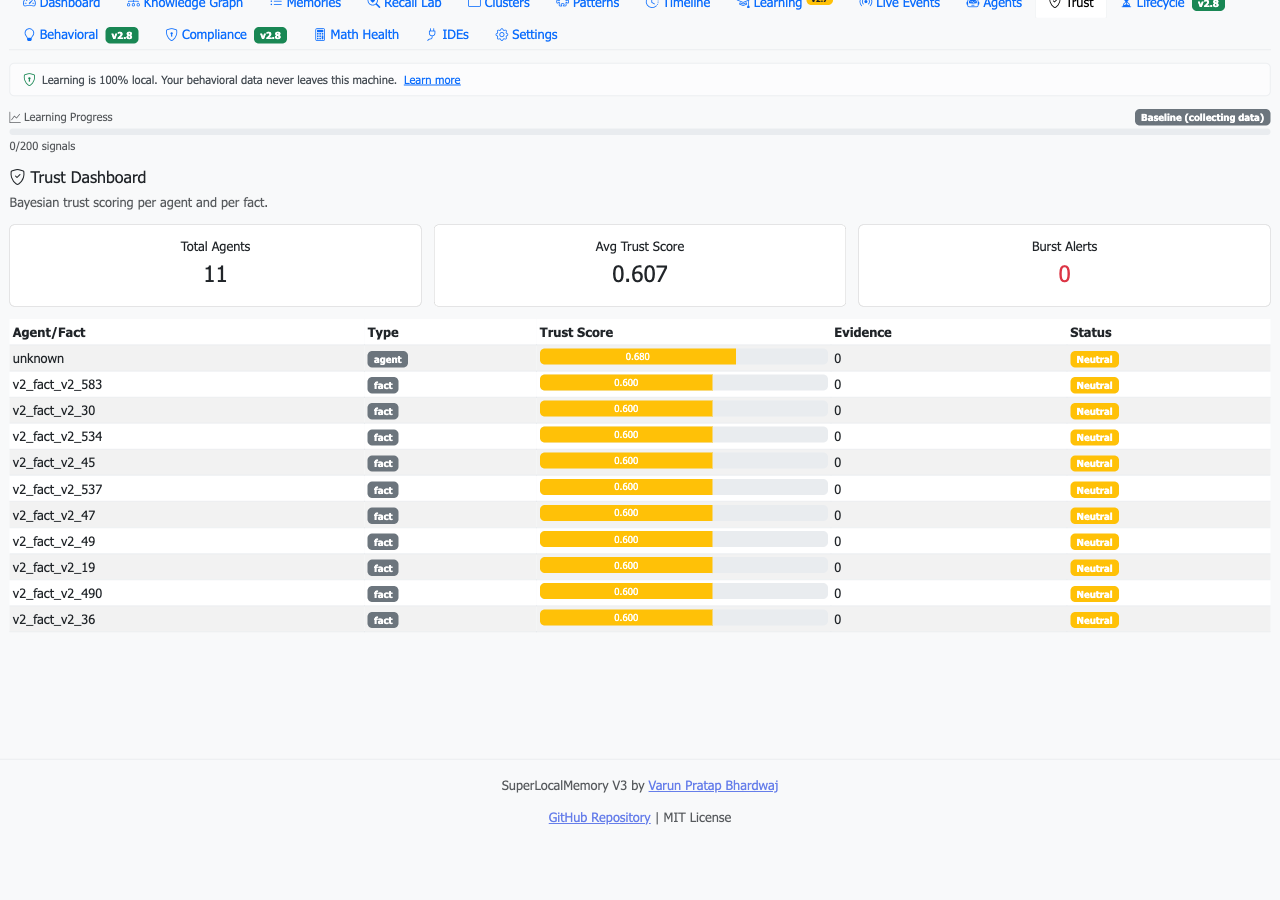

- Trust scoring — Bayesian trust per agent and per fact

- EU AI Act compliant — Mode A satisfies data sovereignty by architecture

- 85% open-domain — highest of any system evaluated, including cloud-powered ones

- 1400+ tests — production-grade reliability

- Multi-profile — isolated memory contexts for work, personal, clients
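The four retrieval channels listed above (semantic, keyword, entity graph, temporal) each produce their own ranking of candidate memories. This page does not say how SuperLocalMemory merges them; one common technique for combining ranked lists is reciprocal rank fusion (RRF), sketched below. The function name, the channel keys, the memory ids, and the constant `k=60` are assumptions for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of memory ids into one list (RRF sketch).

    rankings: dict mapping a channel name to memory ids, best match first.
    k: smoothing constant; 60 is a conventional default for RRF.
    """
    scores = defaultdict(float)
    for ranked_ids in rankings.values():
        for rank, memory_id in enumerate(ranked_ids, start=1):
            scores[memory_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a stable order.
    return sorted(scores, key=lambda m: (-scores[m], m))


# Hypothetical per-channel results for a single query.
channel_results = {
    "semantic": ["m1", "m2", "m3"],
    "keyword": ["m2", "m1"],
    "entity_graph": ["m2"],
    "temporal": ["m3", "m2"],
}
fused = reciprocal_rank_fusion(channel_results)
```

A memory that ranks well in several channels (here `m2`) rises to the top even if no single channel ranks it first, which is the usual motivation for multi-channel retrieval.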

| Page | What You'll Learn |

|---|---|

| V3.2 Overview | All v3.2 features, feature matrix by mode, migration guide |

| Auto-Invoke | Multi-signal scoring, FOK gating, ACT-R mode, contextual descriptions |

| Association Graph | SYNAPSE spreading activation, auto-linking, Hebbian strengthening |

| Temporal Intelligence | Bi-temporal validity, contradiction detection, historical queries |

| Consolidation | Core Memory blocks, 6-step consolidation cycle, triggers |

| Page | What You'll Learn |

|---|---|

| Installation | Full install guide — npm, pip, git clone |

| Quick Start Tutorial | Step-by-step for new users and V2 upgraders |

| Getting Started | Install + first memory in 5 minutes |

| Modes Explained | A vs B vs C — which is right for you |

| CLI Reference | All 18 slm commands with --json docs |

| MCP Tools | All 24 MCP tools for IDE integration |

| IDE Setup | Per-IDE configuration guide |

| Migration from V2 | Upgrade guide for existing users |

| Auto-Memory | How auto-capture and auto-recall work |

| Architecture Overview | How the system works |

| Mathematical Foundations | The math behind the memory |

| Compliance | EU AI Act, GDPR, retention policies |

| FAQ | Common questions answered |

- V3 Paper: Information-Geometric Foundations for Agent Memory (arXiv) | Zenodo

- V2 Paper: Privacy-Preserving Multi-Agent Memory (arXiv)

Part of Qualixar | Created by Varun Pratap Bhardwaj

SuperLocalMemory V3 — Your AI Finally Remembers You. 100% local. 100% private. 100% free.
